Technical SEO: A guide to success

Want to brush up on your technical SEO skills? Here’s everything you need to know about technical SEO. Let’s dive in.

It’s no secret that a website needs to be well optimized for search engines to rank in SERPs. Multiple factors help a website achieve a higher ranking, and technical SEO is one of them. It is an essential aspect of SEO that can improve the organic visibility of a webpage.

Technical SEO is not concerned with content or its promotion. It is an important, behind-the-scenes aspect that focuses on improving the crawlability and indexing of web pages and on strengthening a website’s infrastructure.

Your SEO efforts will not show the expected results, and a website may not appear on SERPs if its technical aspects are not in the proper state. Technical SEO isn’t easy. Therefore, it’s necessary to be well aware of what technical SEO is and how to do it correctly. We have created this step-by-step technical SEO checklist to help you with the technical aspects of SEO.

Let’s get started.

What is Technical SEO?

Technical SEO is a significant part of the whole SEO game. It is the process of improving the technical aspects of a website to enhance its search visibility. The pillars of technical aspects are crawling, indexing, rendering, and website architecture.

This process helps search engines to find, crawl, understand, and index a website more effectively so that it can improve the organic rankings of web pages.

Search engines prefer websites with characteristics such as responsive design, quick loading times, and a secure connection. Overall, “technical SEO” refers to the process of optimizing a website’s technical foundation in order to improve its ranking on search engine results pages.

Why is Technical SEO Important?

Technical SEO is directly related to the ranking of web pages and their user experience. You can’t rank high on SERPs even if you create strong, engaging, and unique content if your website is technically unfit.

In order to rank web pages, search engines must be able to discover, crawl, and index them properly. Thus, by improving your technical aspects, you ease this process for search bots. Furthermore, technical SEO is not only important for search engines. It’s quite essential for the users as well. A website needs to be functioning properly. It should be fast, clear, and user-friendly.

To offer a great user experience, the technical elements of your website, such as page loading speed, mobile optimization, and security, must be well optimized.

Thus, technical SEO creates a better experience for both search bots and users.

# Understanding crawling

Before going on to the technical elements, it is necessary to understand some basic concepts. Let us explain what crawling is and how crawlers perform it.

What is web crawling?

Crawling is the process by which search crawlers, or bots, discover the content of web pages and work out what it is about. These crawlers follow the links on web pages to discover new content and read it so that the pages can be indexed and appear in search results.

How crawling works

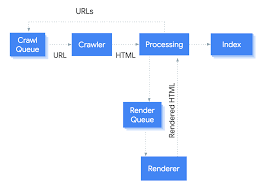

Search crawlers move from page to page by following links, grabbing the content of each page to understand it. They also collect the links on each page to discover new content. Several elements are involved in this process.

- URL sources

A crawler needs somewhere to start. It begins with URLs it already knows, then moves from page to page by following the links on them, building a list of URLs as it goes. Crawlers also read the sitemaps that site owners submit, which improves the crawling process.

- Crawl queue

It is the list of URLs that is created by crawlers to crawl or recrawl the pages. These URLs are then efficiently crawled by the crawlers or bots.

- Crawler

It is a system that grabs the content of web pages for crawling. These are also known as spiders or bots.

- Processing systems

The system sends the downloaded web pages to the renderer, which loads them for further processing.

- Renderer

It loads the page just like a browser does with JavaScript and CSS files to assess the content and understand the layout of the web page.

- Index

After the pages are crawled and understood, search engines index them. Indexed pages are the stored pages that search engines present to users.
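The way these pieces fit together can be sketched in a few lines of Python. This is a toy illustration only, not how any real search engine is implemented: the `PAGES` dictionary is a made-up, in-memory stand-in for the web, and the `index` list stands in for the search index.

```python
from collections import deque

# Hypothetical in-memory "web": each URL maps to the links found on that page.
PAGES = {
    "/": ["/blog", "/about"],
    "/blog": ["/blog/post-1", "/"],
    "/about": [],
    "/blog/post-1": ["/blog"],
}


def crawl(seed_urls, pages):
    """Visit pages breadth-first and return URLs in the order they were crawled."""
    queue = deque(seed_urls)   # crawl queue: URLs waiting to be fetched
    seen = set(seed_urls)      # URLs already discovered, so none is queued twice
    index = []                 # stands in for the search index
    while queue:
        url = queue.popleft()
        links = pages.get(url)
        if links is None:      # unknown page: nothing to index
            continue
        index.append(url)
        for link in links:     # newly discovered links join the crawl queue
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return index


crawled = crawl(["/"], PAGES)
print(crawled)
```

Starting from the homepage as the only URL source, the sketch reaches every page that is linked from somewhere, which is why internal linking matters so much for discovery.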

How can you control Crawling on your site?

You can control crawling on your site. The following methods let you decide what gets crawled and what does not.

- Robots.txt

With the robots.txt file, you basically set crawling preferences for your site. It is a file that informs search engines where they can go on your site and where they can’t. This can help you avoid overloading of crawling requests. For instance, you can restrict your unimportant pages or similar pages on your site.

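However, Google may still index a blocked page without crawling it if other pages link to it. To avoid this, you can use the noindex tag to block indexing, or password-protect the page. Note that a crawler can only see a noindex tag on a page it is allowed to crawl, so that page must not also be blocked in robots.txt.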

- Crawl Rate

In robots.txt, you can use a crawl-delay directive to set how long crawlers should wait between requests to your pages. Many search engines support this directive, but Google does not. For Google, you need to adjust the crawl rate in Google Search Console.

- Access Restrictions

If you don’t want search engines to index your pages but make them accessible to some users, you can do the following things.

- You can use some kind of login system; or

- You can use HTTP authentication for your pages where a password is required for access; or

- You can use IP whitelisting, where you can allow specific IP addresses to access the pages.

You can use these methods for member-only content or for staging, testing, or development sites. They keep search engines from accessing and indexing the pages while still allowing access to specific users.
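To see how robots.txt rules and a crawl-delay value are interpreted, here is a minimal sketch using Python’s standard `urllib.robotparser` module. The robots.txt content, the `ExampleBot` user agent, and the example.com URLs are all made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; the paths and the delay are made up.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /search
Crawl-delay: 10
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Ask whether a (hypothetical) crawler may fetch two different URLs.
blog_allowed = parser.can_fetch("ExampleBot", "https://example.com/blog/post-1")
admin_allowed = parser.can_fetch("ExampleBot", "https://example.com/admin/login")

# Read back the crawl-delay value that applies to this crawler.
delay = parser.crawl_delay("ExampleBot")

print(blog_allowed, admin_allowed, delay)
```

Checking your own rules this way before deploying them is a cheap way to avoid accidentally blocking pages you want crawled.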

How can you check your website’s crawl activity?

The easiest way to track your website’s crawling on Google is to use the Crawl Stats report of Google Search Console. It gives you full details of your website’s crawling activity.

To check your website’s total crawl activity, you need to access your server logs and use a tool to understand the data better. This may be a complex procedure; however, if your hosting supports cPanel, you can access raw logs and aggregators like Awstats and Webalizer to find the details.
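If you have access to raw logs, a short script can give a first impression of crawler activity. The sketch below assumes the common “combined” access-log format; the log lines are invented for illustration, and matching on the user-agent string alone is a simplification, since user agents can be spoofed.

```python
import re
from collections import Counter

# Made-up access-log lines in the "combined" log format.
LOG_LINES = [
    '66.249.66.1 - - [10/Jan/2024:10:00:00 +0000] "GET /blog HTTP/1.1" 200 512 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [10/Jan/2024:10:00:05 +0000] "GET /blog HTTP/1.1" 200 512 "-" '
    '"Mozilla/5.0 (Windows NT 10.0)"',
    '66.249.66.1 - - [10/Jan/2024:10:01:00 +0000] "GET /old-page HTTP/1.1" 404 128 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
]

# Captures the requested path, the status code, and the final quoted field (user agent).
LINE_RE = re.compile(
    r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" (?P<status>\d{3}) .* "(?P<agent>[^"]*)"$'
)


def bot_hits(lines, bot_name="Googlebot"):
    """Count requests per (path, status) whose user agent mentions bot_name."""
    hits = Counter()
    for line in lines:
        match = LINE_RE.search(line)
        if match and bot_name in match.group("agent"):
            hits[(match.group("path"), int(match.group("status")))] += 1
    return hits


hits = bot_hits(LOG_LINES)
for (path, status), count in sorted(hits.items()):
    print(path, status, count)
```

Grouping by status code makes it easy to spot pages where the crawler keeps hitting errors.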

- Crawl adjustments

Each website has a different crawl budget, based on how often Google wants to crawl it and how much crawling the site can handle. Pages that change often or are more popular are crawled more frequently than pages that are poorly linked or less popular.

If a site shows signs of stress, crawlers may slow down or even stop crawling until things return to normal.

After successful crawling, pages are rendered and then sent for indexing. These indexed pages are presented in response to search queries.

Understanding indexing

After understanding the process of crawling, let us understand what indexing is, what can affect indexing and how to check if web pages are indexed.

What is indexing?

Indexing refers to the process of storing and organizing the information that search bots collect so that search engines can respond to queries very quickly.

For search engines, searching for relevant information for a specific query from a wide pool of information can be a slow and time-consuming process. That’s why search engines crawl and index all pages to supply instant results.

Following are some important points of consideration related to indexing:

# Robots directives

A robots meta tag notifies search crawlers on how they can crawl and index a certain webpage. The tag is placed into the <head> section of a web page to guide search crawlers. For example, the tag here directs search bots not to index the page.

<meta name="robots" content="noindex"/>

Your web page can’t be indexed if it carries a noindex robots meta tag. If you want to keep certain pages out of the index, you can use robots meta tags.
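A quick way to check whether a page carries this directive is to parse its HTML. The following is a minimal sketch using Python’s standard `html.parser`; the class and function names are hypothetical, and it only looks at the generic `robots` meta tag, not at crawler-specific tags or HTTP headers.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects the directives from any <meta name="robots"> tag."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            content = attrs.get("content") or ""
            # Directives are comma-separated, e.g. "noindex, nofollow".
            self.directives.update(d.strip().lower() for d in content.split(","))


def is_noindex(html):
    """Return True if the page asks search engines not to index it."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives


blocked = '<html><head><meta name="robots" content="noindex"/></head></html>'
open_page = '<html><head><meta name="robots" content="index, follow"></head></html>'
print(is_noindex(blocked), is_noindex(open_page))
```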

# Canonicalization

When multiple versions of the same page exist, Google indexes only one of them. This is known as canonicalization, and only the canonical version is shown in the results. Search engines check a variety of signals to determine the canonical URL, such as canonical tags, internal links, redirects, duplicate pages, and sitemaps.

To ensure that the right version is selected from among your duplicate pages, mark your preferred version as canonical. This helps you control indexing and prevents duplicate-content issues on your site.
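One of those signals, the canonical tag, is a `<link rel="canonical">` element in the page’s `<head>`. As a rough sketch, the snippet below reads the declared canonical URL from a page so you can confirm it points where you expect. The page markup and URL are invented, and the helper names are hypothetical.

```python
from html.parser import HTMLParser


class CanonicalParser(HTMLParser):
    """Records the href of the first <link rel="canonical"> tag."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        rel = (attrs.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel and self.canonical is None:
            self.canonical = attrs.get("href")


def canonical_url(html):
    """Return the canonical URL declared in the page, or None if there is none."""
    parser = CanonicalParser()
    parser.feed(html)
    return parser.canonical


# Hypothetical duplicate page that points at its preferred version.
PAGE = '<head><link rel="canonical" href="https://example.com/shoes"/></head>'
print(canonical_url(PAGE))
```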

# How to discover your indexed pages?

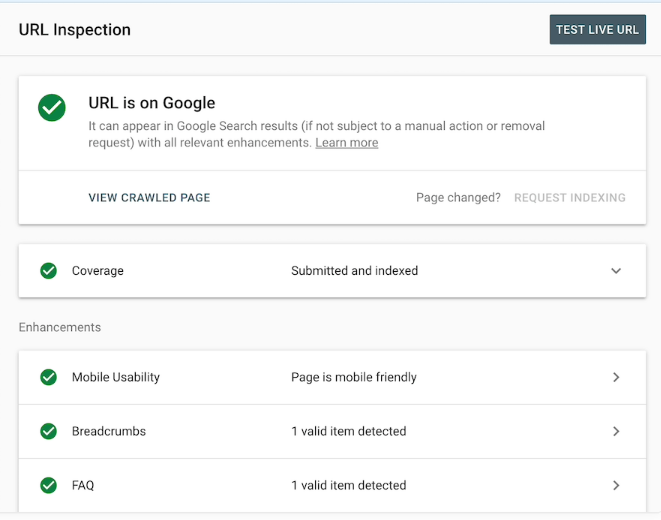

Google Search Console’s URL Inspection tool can help you discover whether Google has indexed your web pages.

Technical SEO checklist

Now that you have understood crawling and indexing, let’s dive into the technical SEO checklist that can boost your search presence.

- Organize your Website structure

- Use a secure connection-Add SSL

- Ensure your site is mobile-friendly.

- Improve your site’s Speed

- Fix Thin and duplicate content.

- Construct an XML sitemap for your website.

- Add structured data to your website.

- Use Accelerated Mobile Pages.

- Fix your website’s Broken links

- Use Hreflang Tag

1. Organize your Website structure

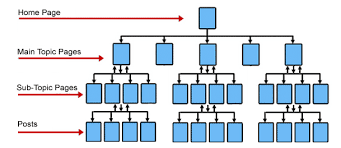

The structure of any website is an important element that can boost or hamper its technical SEO. Site structure is how your web pages are linked and organized on your website.

Most crawling and indexing issues on a site arise from poor site structure. If your site structure is good, you are unlikely to face crawling and indexing issues. Moreover, structure affects everything else you do to optimize your site, so a strong site structure makes every other technical SEO task easier.

- Use a simple, organized, flat site structure

Poor site structure may create orphan pages that are not linked with other web pages on your site. Furthermore, it might be difficult to discover and fix indexing issues on a poorly structured site.

Your website’s structure should be simple, well-organized, and flat, and your web pages should be appropriately interlinked with other pages on your site. This means the pages on your site should be just a few clicks away from each other.

This is because a flat structure makes it easy for search engines to crawl your website efficiently.
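Click depth and orphan pages can both be measured from a map of internal links. The sketch below is illustrative only: `SITE_LINKS` is a made-up link map (in practice it would come from a crawl of your site), and depth is counted as the fewest clicks from the homepage.

```python
from collections import deque

# Hypothetical internal-link map: page -> pages it links to.
SITE_LINKS = {
    "/": ["/products", "/blog"],
    "/products": ["/products/shoes"],
    "/blog": ["/blog/post-1"],
    "/products/shoes": [],
    "/blog/post-1": ["/products/shoes"],
    "/old-landing-page": ["/"],  # exists, but no other page links to it
}


def click_depths(links, home="/"):
    """Return the fewest clicks needed to reach each page from the homepage."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


depths = click_depths(SITE_LINKS)
# Pages that exist but cannot be reached by following links are orphans.
orphans = sorted(set(SITE_LINKS) - set(depths))

print(depths)
print(orphans)
```

In a flat structure the largest depth value stays small, and the orphan list is empty.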

-

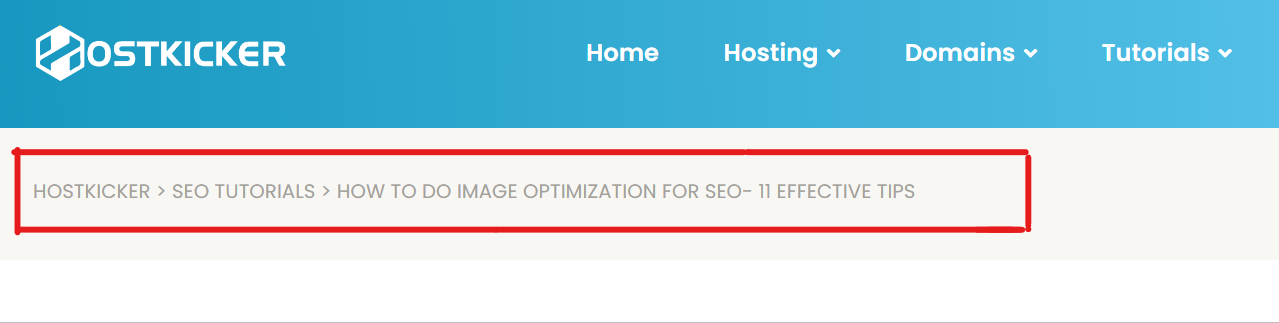

Use Breadcrumbs Navigation

Breadcrumb navigation is a secondary navigation feature that shows users where they are on a website. It shows them the path they followed to reach the current page. Usually, it is found at the top of the webpage.

It is SEO-friendly because it automatically adds internal links to categories and subpages on your site, and user-friendly because it lets users click back to any earlier page in the path. It is an alternative way to navigate your website and shouldn’t replace primary navigation menus.

Breadcrumb navigation suits larger websites and those with content arranged hierarchically. It adds little to smaller, single-level websites that have no hierarchy.

See the breadcrumb navigation on our blog to visualize what it is.
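For a site whose URLs mirror its hierarchy, a breadcrumb trail can be derived from the URL path. This is only a sketch under that assumption; the function name, the example URL, and the way labels are built from URL segments are all hypothetical, and many sites store breadcrumb labels in their CMS instead.

```python
from urllib.parse import urlparse


def breadcrumb_trail(url):
    """Build (label, link) pairs from a URL, assuming the path mirrors the hierarchy."""
    parsed = urlparse(url)
    base = f"{parsed.scheme}://{parsed.netloc}"
    trail = [("Home", base + "/")]
    path = ""
    for segment in parsed.path.strip("/").split("/"):
        if not segment:
            continue
        path += "/" + segment
        # Turn a slug such as "technical-seo" into a readable label.
        label = segment.replace("-", " ").title()
        trail.append((label, base + path))
    return trail


trail = breadcrumb_trail("https://example.com/blog/technical-seo/crawling")
print(" > ".join(label for label, _ in trail))
```

Each pair in the trail becomes one internal link, which is where the SEO benefit of breadcrumbs comes from.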

2. Use a secure connection-Add SSL

Your website should be secured with an SSL certificate, which encrypts the connection between a browser and a web server. Google has announced HTTPS as a ranking signal, and a secure connection helps establish trust with users. Also, Google prioritizes secure URLs over non-secure ones.

If you haven’t moved from HTTP to HTTPS yet, do it soon to support your ranking. You can usually get an SSL certificate from your hosting provider.

3. Ensure your site is mobile-friendly.

Your website design should be responsive, adjusting itself automatically to any device (mobile, tablet, desktop, or laptop) to offer a great user experience. Since most users visit sites on mobile devices, Google made mobile-friendliness a ranking factor so that its results offer a better user experience.

This means Google checks for the mobile version of your site to rank on SERPs. Therefore, your website should be mobile-friendly to achieve a higher ranking on SERPs.

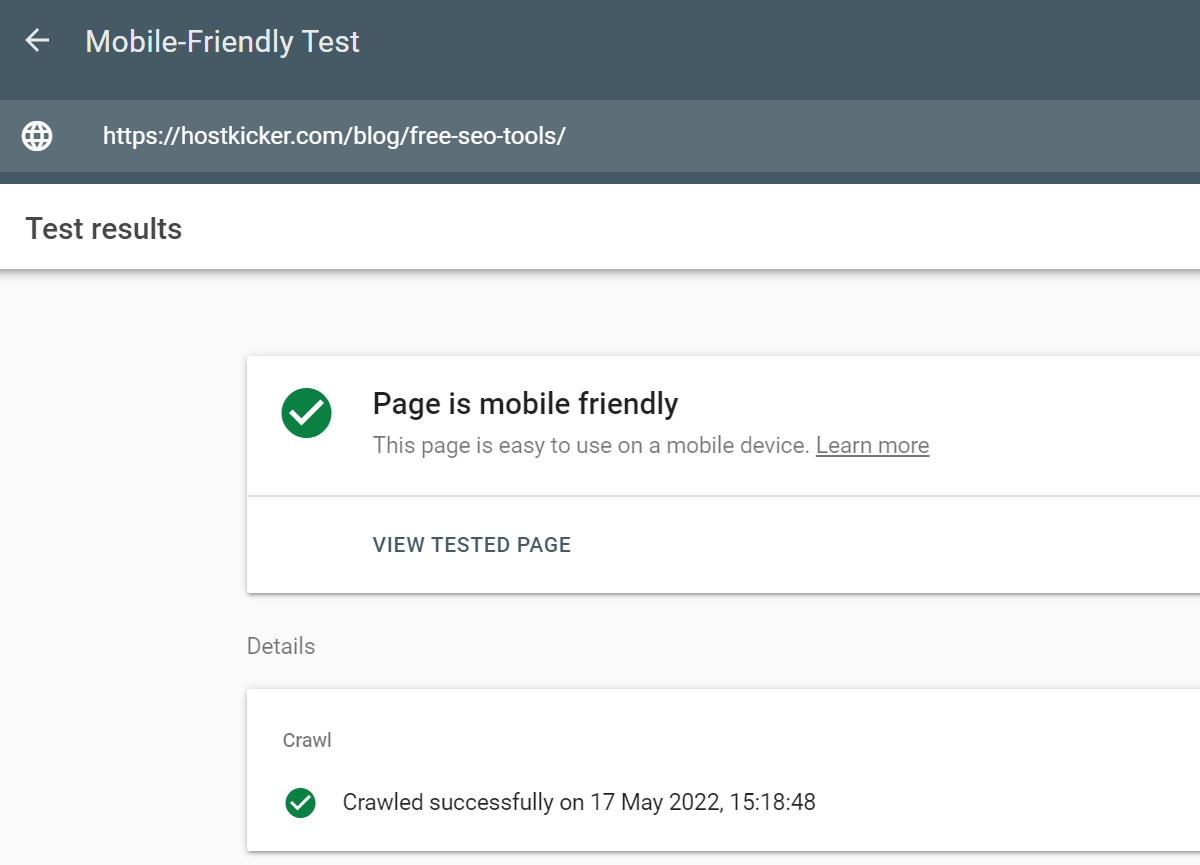

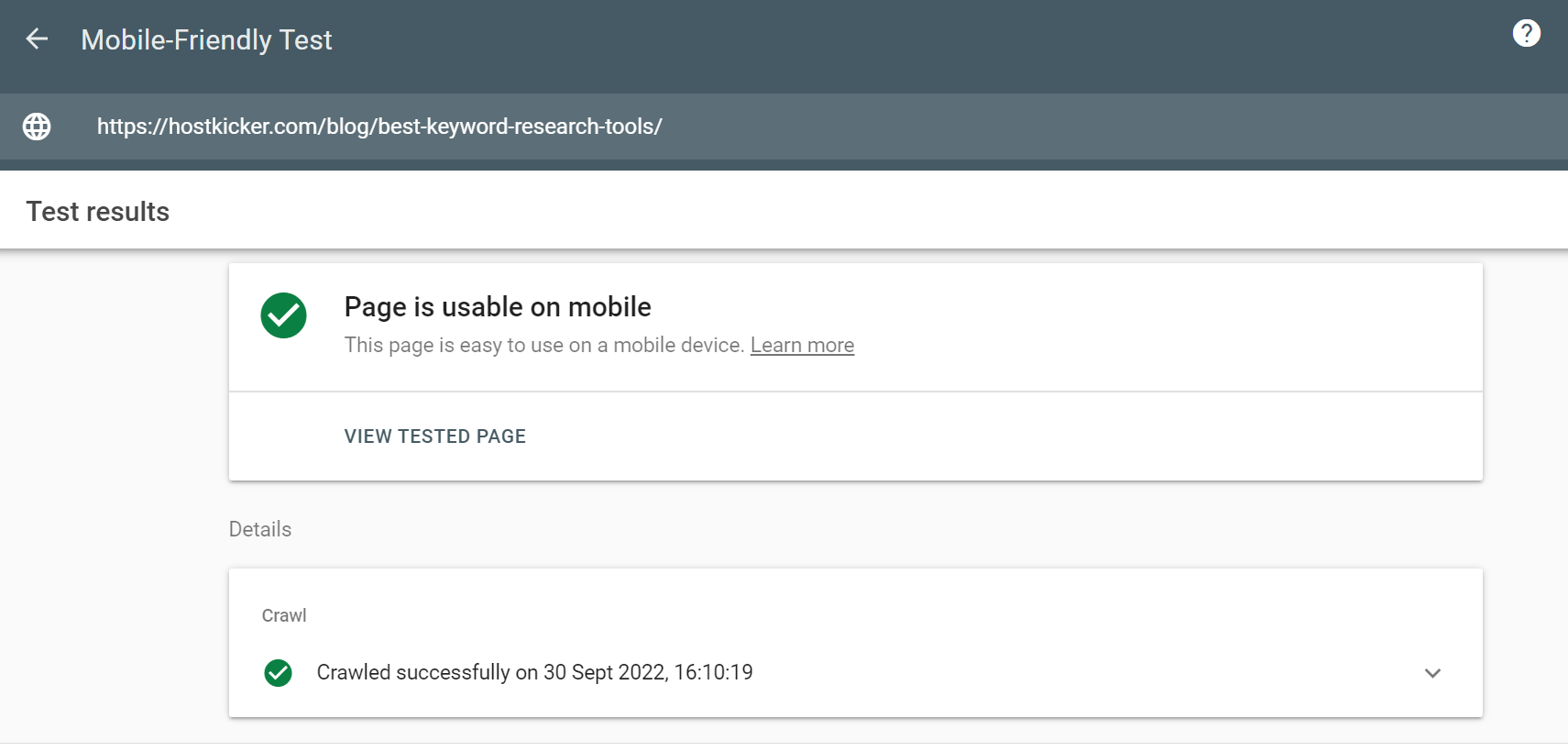

You can use Google’s Mobile-Friendly Test to test your site’s mobile friendliness. Simply visit the site and enter your website’s page URL to check whether or not it’s mobile-friendly.

4. Improve your site’s Speed

Page speed is an important technical element that can directly affect your website’s ranking. Search engines reward sites that load quickly, because loading time greatly affects the user experience. That’s why you must pay attention to how your web pages load and take steps to improve their speed. You can improve your page loading speed in many ways:

- Use a fast web hosting service.

- Remove unnecessary scripts and plugins from your site

- Optimize your images and reduce their file size

- Compress your web pages

- Minify your website’s code

- Cache your web pages

- Reduce redirects on your site

- Use CDN
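To get a feel for what compressing web pages means, the sketch below gzips a sample HTML document with Python’s standard `gzip` module. The sample page is artificial and highly repetitive, so it compresses far better than a real page would; in practice, compression is usually switched on in the web server or CDN rather than in application code.

```python
import gzip

# Artificial sample page; real pages are less repetitive and compress less.
html = (
    "<html><body>"
    + "<p>Technical SEO checklist item</p>" * 200
    + "</body></html>"
).encode("utf-8")

compressed = gzip.compress(html)

print(f"original:   {len(html)} bytes")
print(f"compressed: {len(compressed)} bytes")
```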

5. Fix Thin and duplicate content.

Thin and duplicate content on your site can affect your website’s ranking. Thin content contains little valuable or relevant information and does not satisfy the user’s search intent; pages without proper internal links can fall into this category too. Duplicate content is content that is identical or very similar to other content on your site, and it can confuse users as well as search engines.

Earlier, people used duplicate content to manipulate search rankings or increase traffic to their sites, but search engines are smarter than ever. They can readily detect duplicate and thin content on your site and lower your rankings. Whether intentionally or unknowingly, your site might be suffering from this issue.

Since Google wants to provide valuable, relevant, and unique information to users, content that provides little or no value can hurt your SEO, and your pages might be pushed down in search rankings. If you previously prioritized the quantity of content over its quality, you have likely hampered your SEO. Find your website’s thin and duplicate content and fix it.

You can use canonical tags to indicate the preferred version of your content, redirect to the canonical URL, or noindex the less valuable pages.
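A first pass at finding such pages can be automated. The sketch below flags pages under a word-count threshold as thin and groups pages whose normalized text is identical as duplicates. The page texts are invented, the `MIN_WORDS` threshold is an arbitrary assumption rather than any search engine’s rule, and exact matching will miss near-duplicates, which need fuzzier comparison.

```python
import hashlib
import re

MIN_WORDS = 50  # hypothetical threshold; tune it for your own content

# Made-up page texts keyed by URL.
PAGES = {
    "/guide": "Technical SEO improves crawling and indexing. " * 20,
    "/guide-copy": "technical seo improves   crawling and indexing. " * 20,
    "/stub": "Coming soon.",
}


def normalize(text):
    """Collapse whitespace and lowercase so trivial differences are ignored."""
    return re.sub(r"\s+", " ", text).strip().lower()


def audit(pages, min_words=MIN_WORDS):
    """Return (thin_urls, duplicate_groups) for the given pages."""
    thin, by_hash = [], {}
    for url, text in pages.items():
        clean = normalize(text)
        if len(clean.split()) < min_words:
            thin.append(url)
        digest = hashlib.sha256(clean.encode("utf-8")).hexdigest()
        by_hash.setdefault(digest, []).append(url)
    duplicates = [urls for urls in by_hash.values() if len(urls) > 1]
    return thin, duplicates


thin, duplicates = audit(PAGES)
print("thin:", thin)
print("duplicates:", duplicates)
```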

6. Construct an XML sitemap for your website.

Creating an XML sitemap can have a significant impact on how well your website is crawled and, in turn, ranked. It is a file that lists your website’s URLs, along with details about them, so that a crawler can crawl your website more efficiently. It is basically a roadmap of your site. A Google representative has even stated that XML sitemaps are the “second most important source” for discovering URLs.

If you haven’t created a sitemap for your website, do so soon; if you already have one, keep it up to date. You can also set your sitemap to update automatically whenever a page is revised or a new page is added. After creating it, submit it in Google Search Console. You can learn more about XML sitemaps here.
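As a rough illustration of what such a file contains, the sketch below builds a small sitemap with Python’s standard `xml.etree.ElementTree`. The URLs and dates are placeholders; most sites would generate the file from their CMS or a plugin rather than by hand.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Build a sitemap string from (url, last_modified_date) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Placeholder pages; replace with your site's real URLs and dates.
sitemap_xml = build_sitemap([
    ("https://example.com/", "2024-01-10"),
    ("https://example.com/blog/technical-seo", "2024-01-12"),
])
print(sitemap_xml)
```

Regenerating the file whenever a page is added or revised keeps the `lastmod` values accurate.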

7. Add structured data to your website.

Structured data is code you add to your site to help search engines understand your content more effectively. Using structured data, search engines can index your web content more efficiently and provide more relevant results. Moreover, search engines can use structured data to display rich results.

Rich results are more compelling and provide useful data relevant to the user’s query. They might contain the content’s title, description, ratings, reviews, products, and pricing.

A study found that rich results earn higher click-through rates and more traffic. Adding structured data to your SEO strategy makes your pages eligible to appear in rich results.

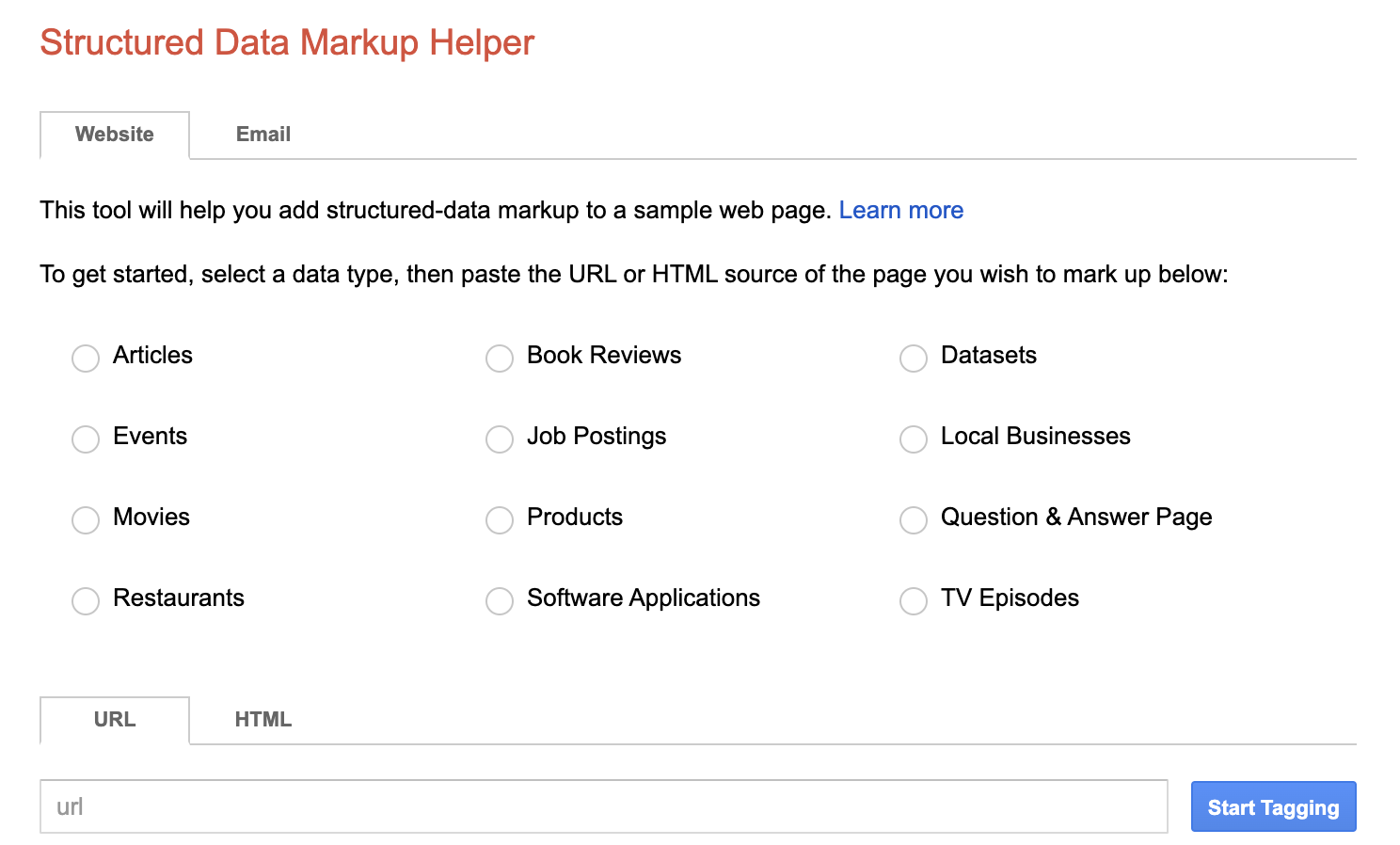

If you are interested in learning details about structured data, you can use Google’s Structured Data Markup Helper.

This tool will help you create structured data for a sample page. Simply choose the content type, enter a URL in the URL field, and start tagging. Click on the items to tag and indicate what each element represents.

After tagging all page elements, Google will create structured data for it.
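Structured data is commonly written as JSON-LD using the Schema.org vocabulary. As a minimal sketch, the snippet below produces an `Article` block that could be placed in a page’s HTML. The headline, author, and date are placeholders, and a real page would usually carry more properties than these three.

```python
import json


def article_json_ld(headline, author, date_published):
    """Return a JSON-LD <script> block describing an article."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,
    }
    return (
        '<script type="application/ld+json">\n'
        + json.dumps(data, indent=2)
        + "\n</script>"
    )


# Placeholder values for illustration only.
snippet = article_json_ld("Technical SEO: A guide to success", "Jane Doe", "2024-01-10")
print(snippet)
```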

8. Use Accelerated Mobile Pages.

If you want to make your website friendlier to mobile users, you can upgrade your pages to AMP. The AMP project was created by Google and many others with the goal of speeding up content delivery on mobile devices. It does this through a restricted form of HTML called AMP HTML.

Since most users visit sites on mobile devices, AMP HTML helps your pages load more quickly on mobiles, which matters under mobile-first indexing. This might give a boost to your SEO, and you may achieve a higher ranking on SERPs.

Many websites have benefited from AMP. If you want to boost your pages’ loading speed, it can be a good option. However, it is not essential for all your web pages. Pages that are already fast enough might not need the upgrade; you just need to optimize those pages for SEO.

9. Fix your website’s Broken links

Broken links on a website can greatly hurt a brand’s standing and affect its SEO. When users click an internal link on your site to find further details and land on a dead page with a 404 error, they are disappointed, their trust in your brand is shaken, and they might never visit again. This is a poor user experience.

Broken links also undermine your SEO efforts: they block the flow of link equity (“link juice”) within your site, hamper your SERP rankings, and can affect your site’s crawling. That’s why you must check for broken links on your site regularly. Many SEO tools, such as Semrush, Ahrefs, and Screaming Frog, can help you find them. Find your site’s broken links and fix them to improve your SEO and your site’s user experience.
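The core of a broken-link check is simple: request every linked URL and flag error responses. The sketch below shows that logic with a stubbed status lookup so it runs without network access. The link map, the status table, and the function names are all hypothetical; a real check would replace `stub_status` with an actual HTTP request.

```python
def find_broken_links(links_by_page, get_status):
    """Return (page, link, status) for every link that responds with an error."""
    broken = []
    for page, links in links_by_page.items():
        for link in links:
            status = get_status(link)
            if status >= 400:  # 4xx and 5xx responses count as broken
                broken.append((page, link, status))
    return broken


# Stand-in for real HTTP requests: a made-up table of response codes.
KNOWN_STATUS = {"/pricing": 200, "/old-offer": 404, "/blog": 200}


def stub_status(url):
    """Pretend to fetch a URL; unknown URLs are treated as 404."""
    return KNOWN_STATUS.get(url, 404)


# Hypothetical internal links found on each page.
LINKS = {
    "/": ["/pricing", "/old-offer"],
    "/blog": ["/pricing", "/deleted-post"],
}

broken = find_broken_links(LINKS, stub_status)
for page, link, status in broken:
    print(f"{page} links to {link} ({status})")
```

Reporting the source page alongside the broken target tells you exactly where the fix is needed.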

10. Use Hreflang Tag

You should use the hreflang tag on your site if it contains multiple versions of a web page in different languages to target audiences in different geographical areas. It is an HTML tag that tells search engines about the language versions of a specific page. It signals the relationship between the web page and its different language versions, and helps search engines show the correct version of the page based on the searcher’s language and location.

For example, suppose you own a business in Germany and are opening a branch in France. You’ve created a French version of your web page and want it to appear in the results when a person from France searches for your website. In this case, you can use the hreflang tag targeting France to notify Google that searchers in France should be shown the French version of the page.

The hreflang tag is important for preventing duplicate content issues on your site and for improving a website’s SEO, ranking, and user experience.

Search engines like Google and Yandex look for hreflang tags, whereas Bing relies mainly on location, links, and the content-language meta tag for this.
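For the Germany and France example, the hreflang annotations could look like the output of the sketch below. The URLs are placeholders and the helper is hypothetical. The optional `x-default` entry names a fallback page for searchers who match none of the listed versions, and each language version of the page would normally carry the same full set of tags, including one pointing to itself.

```python
from html import escape


def hreflang_tags(versions, default_url=None):
    """Build <link rel="alternate"> tags for each language/region version."""
    tags = [
        f'<link rel="alternate" hreflang="{escape(code)}" href="{escape(url)}" />'
        for code, url in versions.items()
    ]
    if default_url:
        # Fallback for searchers who match none of the listed versions.
        tags.append(
            f'<link rel="alternate" hreflang="x-default" href="{escape(default_url)}" />'
        )
    return "\n".join(tags)


# Placeholder URLs for the German and French versions of one page.
VERSIONS = {
    "de-de": "https://example.com/de/",
    "fr-fr": "https://example.com/fr/",
}

tags = hreflang_tags(VERSIONS, default_url="https://example.com/")
print(tags)
```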

Tools for fixing your site’s technical SEO

There are plenty of tools in the market you can use to improve your website’s technical SEO.

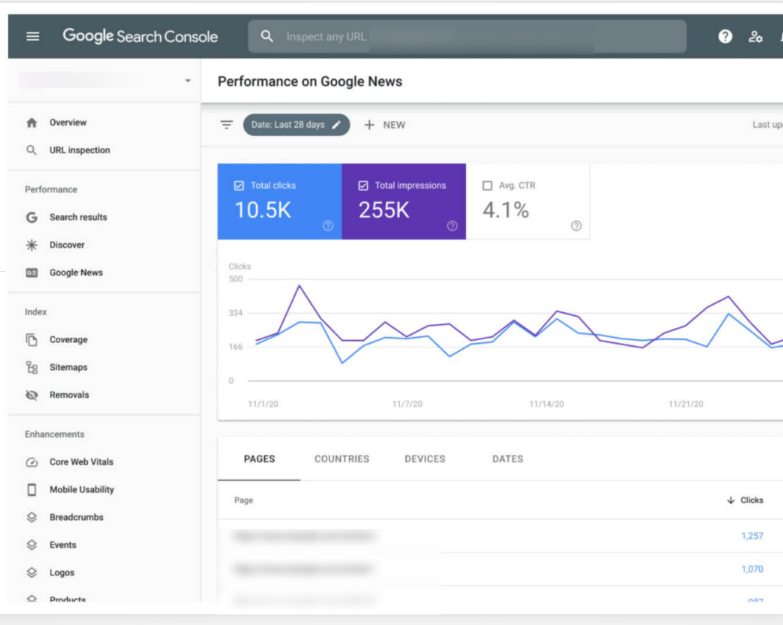

1. Google Search Console

It’s a tool from Google that helps you analyze various website elements and highlights areas for improvement to boost your search presence. It can help you discover and fix technical errors on your site, submit a sitemap, and find structured data issues, load errors, security issues, index status, crawl errors, and much more. You can also check the mobile usability of a website using GSC.

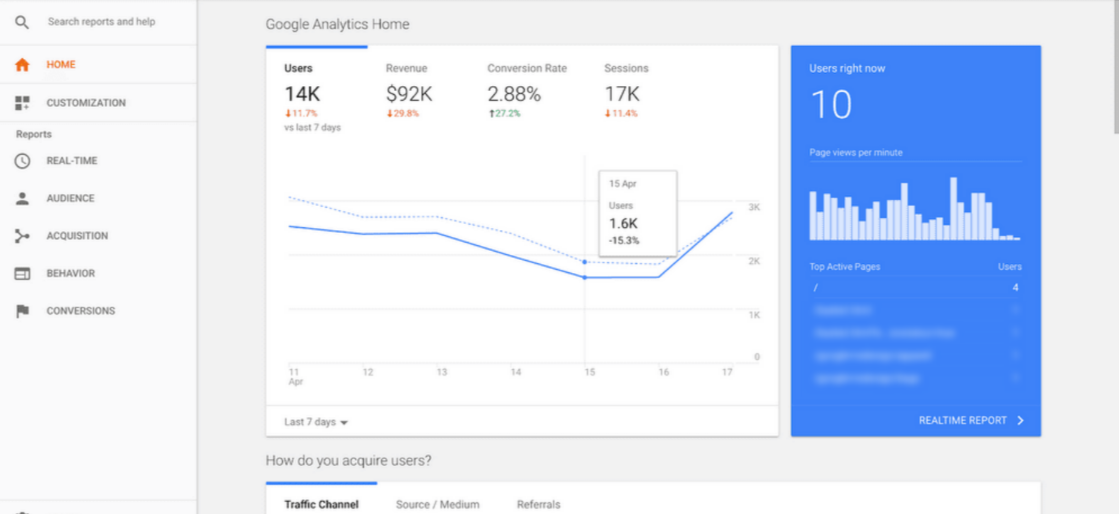

2. Google Analytics

Google Analytics is a strong analytical platform that helps you assess the organic search performance of a website. It reports on bounce rate, user behaviour, traffic, conversions, and much more, and supports custom reports. It’s a user-friendly tool that can be integrated with Google Search Console and other tools for detailed website analysis.

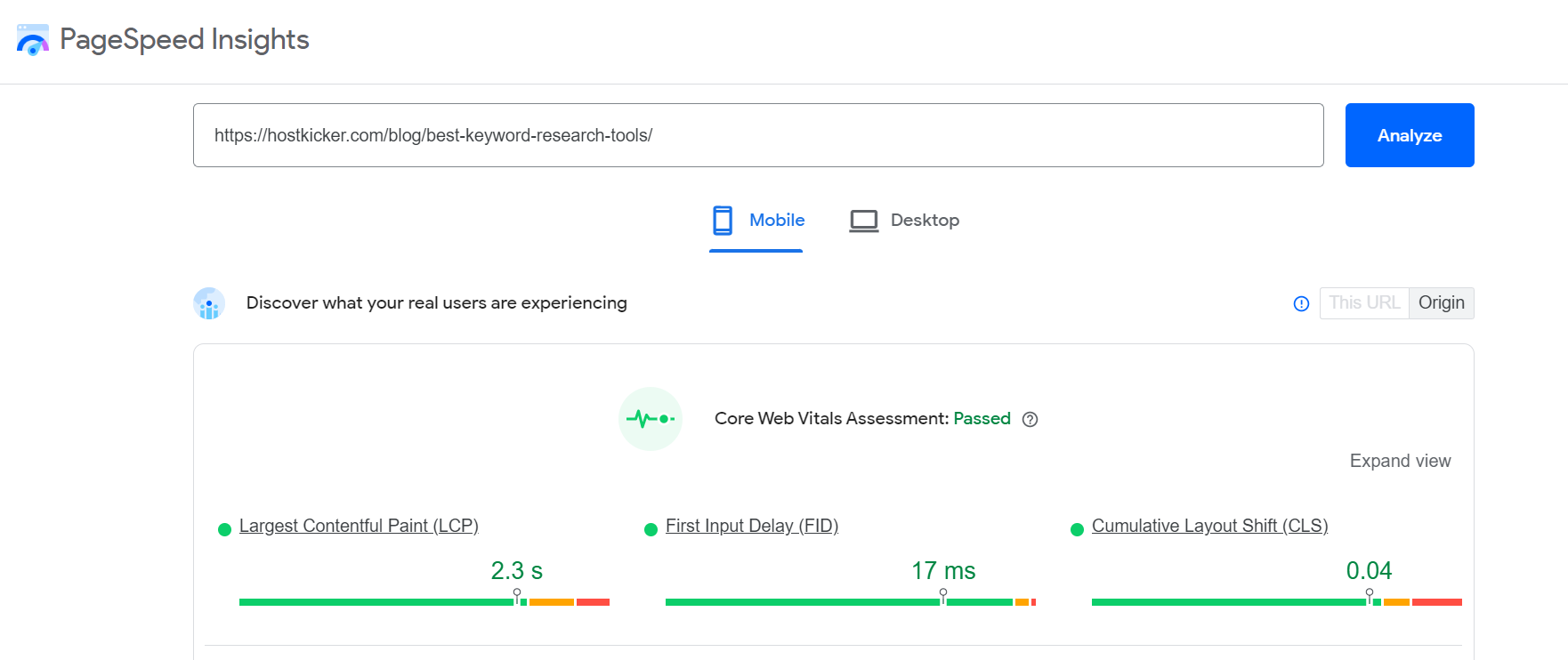

3. Google PageSpeed Insights

Google’s PageSpeed Insights is a free tool you can use to test the loading speed of your web pages. It reports the loading speed for both mobile devices and desktops. Along with the speed score, it also suggests ways to improve the loading speed.

4. Google Mobile-Friendly Testing Tool

It is another tool by Google that helps you to discover whether your web pages are mobile-friendly or not. You can optimize your website for mobiles to enhance its search presence.

We have already discussed the process above.

5. Google’s Schema.org Structured Data Testing Tool

Google’s Structured Data Testing Tool is a simple tool that performs a single function and performs it well. It tests your website’s structured data markup against the Schema.org types supported by Google to discover any issues with your Schema coding. This helps you find the issues easily and fix them quickly.

6. Screaming Frog

Screaming Frog is a crawler that can also help you discover major SEO issues on your site.

It can identify broken links, thin content, error codes, URL errors, canonical errors, or errors with the website’s structure.

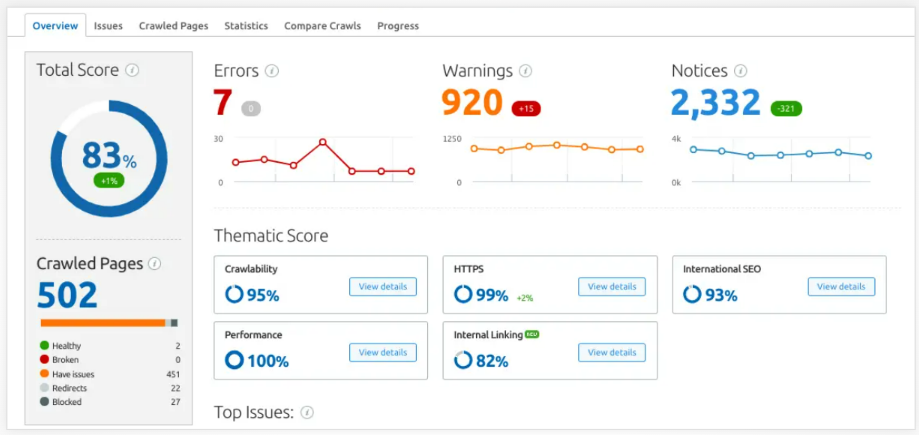

7. SEMrush Site Audit Tool

Semrush’s Site Audit tool is an all-in-one technical SEO tool that can help you uncover issues with your site’s loading speed, crawlability, security, internal links, content, AMP implementation, JS and CSS, and much more. You can use it to find your website’s issues and fix them to boost your search presence.

Semrush’s other tools are powerful too and can help you improve your SEO.

8. Ahrefs

Ahrefs is another powerful technical SEO tool that can help you elevate your SERP rankings. It can uncover plenty of SEO issues on your site and suggest areas for improvement. It can detect problems with your site’s performance, such as slow-loading pages and large HTML or CSS files, as well as duplicate and thin content, hreflang issues, site structure issues such as orphan pages, inbound and outbound link issues, image issues, broken pages, and much more. Use it to find the errors on your site and fix them to boost your technical SEO and online presence.

It’s time to fix your website’s technical SEO

You may now have a basic understanding of technical SEO, including what it is, how to fix it, and the many technical SEO tools available to you.

Technical SEO is a skill that takes time to develop; it can’t be achieved in one or two days. It necessitates a lot of research and trials. There may be errors, failures, or successes.

It consists of various elements that you can check and optimize for SEO to make sure search bots crawl and index your website efficiently without any issues.

It’s important to improve your site’s technical SEO because doing so makes your site stronger, better structured, easier to navigate, and faster to load, which in turn helps search engines comprehend it. Technical SEO can help you improve your search rankings and increase traffic to your site, rewarding you for your efforts.

Audit your site using tools such as Ahrefs or Semrush to find the SEO errors discussed above, and follow the technical SEO checklist to improve your website’s SEO.

We hope this has helped you comprehend all about technical SEO.