What are URL parameters in SEO? A complete guide

In SEO, every detail, big or small, affects how a website performs, and URL parameters are no exception. This post explains what URL parameters are, how they affect SEO and website ranking, and the different ways to handle them.

Analytics experts and developers love URL parameters. Combining parameters can produce hundreds or thousands of URL variations for essentially the same content, and those variations can hurt a website’s SEO and its ranking on SERPs.

You may wonder whether you should drop URL parameters altogether to boost your SEO.

However, we cannot do without URL parameters entirely, because they are important to the user experience on a website. Instead, we need to handle them in an SEO-friendly way.

What are URL parameters?

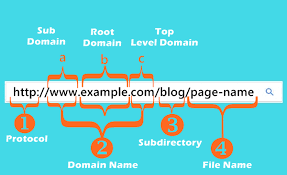

A URL identifies a resource on the web and takes you to the destination page. A typical URL consists of a few essential components: the protocol, the domain name, the top-level domain, and the path.

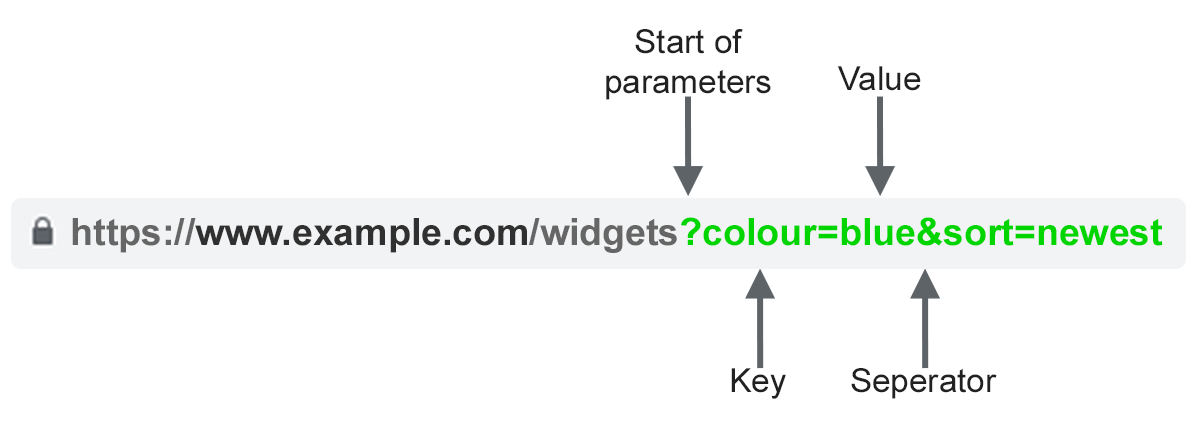

URL parameters, also known as query strings, are additional elements that follow the main URL of a page, usually after a question mark.

You can identify the parameters by looking at the part of the URL after the ‘?’ symbol. Each parameter is a key and a value joined by ‘=’, and a single URL can carry multiple parameters separated by the ‘&’ symbol.

Query parameters are used to organize, sort, and filter the content on a web page, and to track information about visits to your website.

A URL with a parameter string looks like this: https://www.domain.com/?utm_source=newsletter&utm_medium=email
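To make the anatomy concrete, here is a minimal sketch that splits a URL into its base and its key/value pairs using Python’s standard library. The example URL and the helper name `split_url` are assumptions made for illustration.

```python
from urllib.parse import urlsplit, parse_qsl

def split_url(url):
    """Return the URL without its query string, plus the list of (key, value) pairs."""
    parts = urlsplit(url)
    base = f"{parts.scheme}://{parts.netloc}{parts.path}"
    # keep_blank_values=True keeps keys such as "key2=" that carry no value
    params = parse_qsl(parts.query, keep_blank_values=True)
    return base, params

# Illustrative URL, not taken from a real site
base, params = split_url("https://www.example.com/widgets?sort=newest&colour=blue")
print(base)    # https://www.example.com/widgets
print(params)  # [('sort', 'newest'), ('colour', 'blue')]
```

Everything before the ‘?’ is the base URL, and each ‘&’-separated piece after it becomes one key/value pair.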

Why does URL structure matter in SEO?

Search engines use URLs to understand the content and structure of a website, and they can get confused when several different URLs lead to the same page.

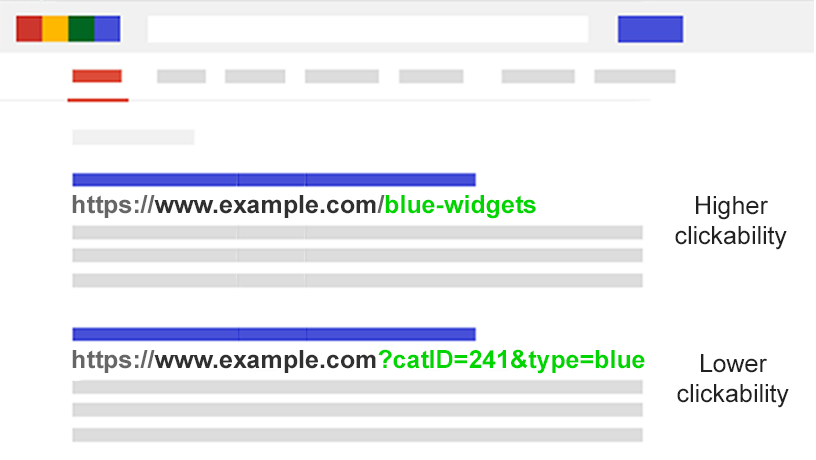

Also, because URL parameters are made up of keys, values, question marks, equal signs, and ampersands, they are hard for people to read, and hard-to-read URLs tend to receive fewer clicks.

In contrast, a descriptive URL attracts more clicks.

How are URL parameters used?

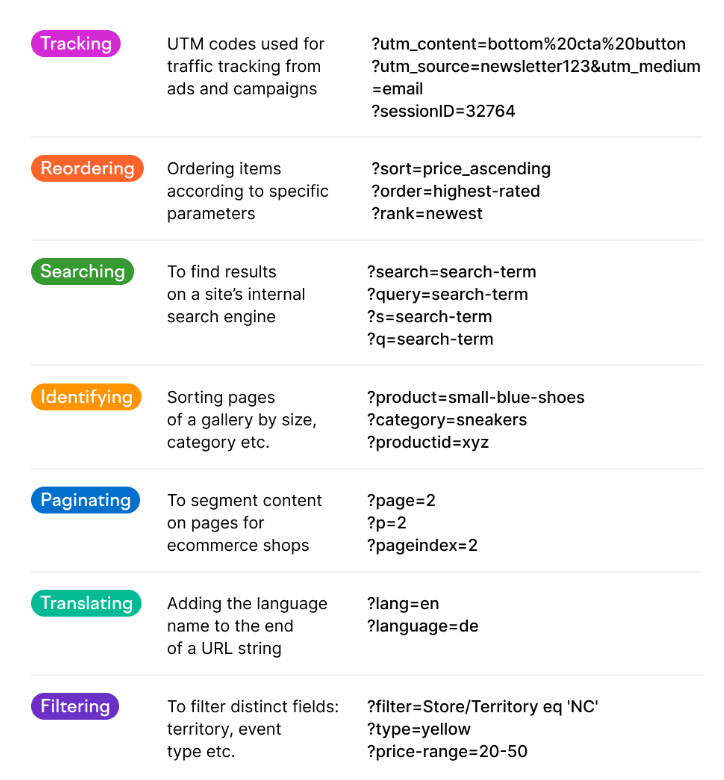

URL parameters are used to filter or sort the content on a web page so that users can navigate the site easily. For example, they let users order a product listing or narrow it to the range of products that fits their needs.

URL parameters are also useful for tracking. Website owners use them to see where their traffic comes from, for example to determine whether their latest social ad campaign was successful.

How do URL parameters work?

URL parameters can work in two different ways: actively and passively.

Active URL parameters- These parameters change what is shown on the page, for example by filtering or sorting the content or by selecting a specific item. They are also known as content-modifying parameters.

For example: On a website, to send a user directly to a specific product named ‘white-nike-shoes’, the URL looks like this-

http://domain.com?productid=white-nike-shoes

Passive URL parameters- These parameters generally do not change anything on the web page. They are mainly used to track clicks, so they are also known as tracking parameters. They help determine where a user came from, i.e. the network, the campaign, or the ad group.

For example: To track traffic from your recent newsletter, the URL looks like this-

https://www.domain.com/?utm_source=newsletter&utm_medium=email
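The split between active and passive parameters can be sketched in a few lines of Python. The set of tracking keys below is an assumption for illustration; your own analytics setup may use different names.

```python
from urllib.parse import urlsplit, parse_qsl

# Assumed list of passive (tracking) keys; extend it to match your own setup
TRACKING_KEYS = {"utm_source", "utm_medium", "utm_campaign",
                 "utm_term", "utm_content", "gclid", "fbclid"}

def classify_params(url):
    """Sort the parameter keys of a URL into active and passive groups."""
    active, passive = [], []
    for key, _value in parse_qsl(urlsplit(url).query, keep_blank_values=True):
        if key.lower() in TRACKING_KEYS:
            passive.append(key)
        else:
            active.append(key)
    return {"active": active, "passive": passive}

print(classify_params("http://domain.com?productid=white-nike-shoes"))
print(classify_params("https://www.domain.com/?utm_source=newsletter&utm_medium=email"))
```

Any key that is not on the tracking list is treated as active here, which is a simplification: a real audit would review each unknown key by hand.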

Other common use cases for URL parameters include reordering, tracking, filtering, identifying, pagination, searching, and translating.

How do URL parameters affect SEO?

Unmanaged or incorrectly configured URL parameters can create many issues that affect your website’s SEO, SERP ranking, crawl budget, and more.

Let us discuss the most common issues with URL parameters:

Duplication of Content

URL parameters are generated when users filter or sort content by factors such as price, colour, and brand.

Usually, parameters do not change the page content much. They just re-order or narrow the page, so the result is very similar to the original version. Google treats every URL as a separate page, so when URLs with different parameters serve essentially the same content, it may consider those pages duplicate content. That won’t get you removed from the SERPs, but it may affect your ranking.

For example, all of the URLs below lead to a collection of widgets.

- Static URL: https://www.example.com/widgets

- URL with a tracking parameter: https://www.example.com/widgets?sessionID=32764

- URL with a reordering parameter: https://www.example.com/widgets?sort=newest

- URL with an identifying parameter: https://www.example.com?category=widgets

- URL with a searching parameter: https://www.example.com/products?search=widget
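One way to see the duplication is to strip the parameters that do not change the content and group the URLs that collapse to the same address. The sketch below does this for the widget URLs; the set `IGNORED_KEYS` is an assumption about which keys are content-neutral on this hypothetical site.

```python
from collections import defaultdict
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

# Assumed keys that do not change what the page contains
IGNORED_KEYS = {"sessionid", "sort"}

def content_key(url):
    """Return the URL with content-neutral parameters removed."""
    parts = urlsplit(url)
    kept = [(k, v) for k, v in parse_qsl(parts.query, keep_blank_values=True)
            if k.lower() not in IGNORED_KEYS]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))

def group_duplicates(urls):
    """Map each stripped URL to the original URLs that collapse onto it."""
    groups = defaultdict(list)
    for url in urls:
        groups[content_key(url)].append(url)
    return dict(groups)

urls = [
    "https://www.example.com/widgets",
    "https://www.example.com/widgets?sessionID=32764",
    "https://www.example.com/widgets?sort=newest",
    "https://www.example.com?category=widgets",
]
for key, members in group_duplicates(urls).items():
    print(key, len(members))
```

The first three URLs end up in one group, which is roughly how a search engine may come to see them as duplicates of one another. The category URL stays separate because a simple string comparison cannot tell that it serves the same widgets.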

Loss of Crawl Budget

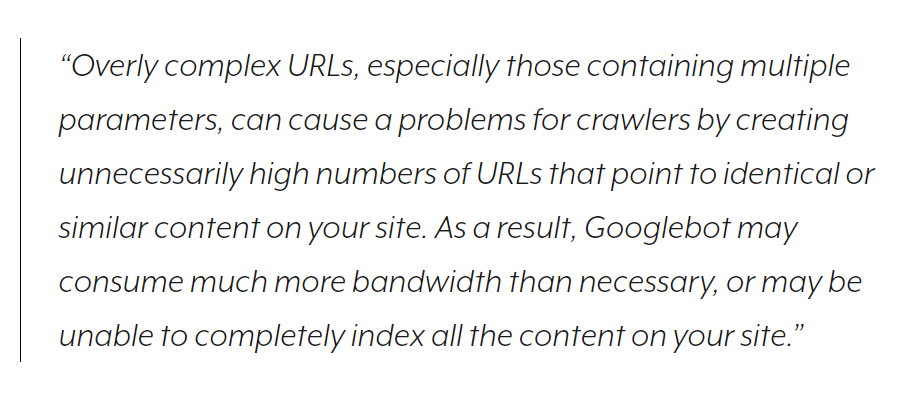

URL optimization is a key part of SEO practice. A complex URL structure with numerous parameters can create many URLs that lead to the same or very similar pages.

Crawling duplicate pages can use up your crawl budget, hinder your site’s crawlability and indexing, and increase the server load. As a result, your important pages may struggle to get indexed and fail to rank.

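According to Google, overly complex URLs, especially those with multiple parameters, can create an unnecessarily high number of URLs that point to identical or similar content, so Googlebot may consume more bandwidth than necessary or fail to index all the content on your site.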

Diluted Page Ranking Signals

If multiple URLs point to similar content, links and social shares may point to different parameterized versions of the page, which dilutes your page ranking signals. Google’s crawlers may then be unsure which version of the page to rank for a search query.

Poor Readability Makes URLs Less Clickable

URLs should be simple and easy to understand. URL parameters usually make a URL less readable: with several keys, values, digits, and symbols, the URL becomes hard to read and looks spammy and less trustworthy. This can lower CTR, which may in turn hurt rankings on SERPs. Also, when these URLs are shared on social media, in emails, and on forums, their spammy appearance discourages users from clicking them, leading to poor brand engagement.

Keyword cannibalization

Keyword cannibalization occurs when your website contains multiple pages optimized for the same or similar target keywords, so that the pages compete with each other.

When filtered or re-ordered versions of a URL target the same page or keyword, Google’s crawlers may struggle to decide which page is best to rank for a search query, and may conclude that the variants add no real value for users. This can also lead to a wrong or unsuitable page ranking, which indirectly affects your site’s performance.

How to find URL parameter issues on your site?

Before you can handle URL parameter issues, you first need to find out whether your site has them.

To identify the parameters used on your site and understand how Google crawls and indexes those pages, you can follow the steps below:

- Use a crawler tool- You can use a website crawling tool like Screaming Frog to audit your site and search for URLs containing “?”. The free version lets you crawl up to 500 URLs.

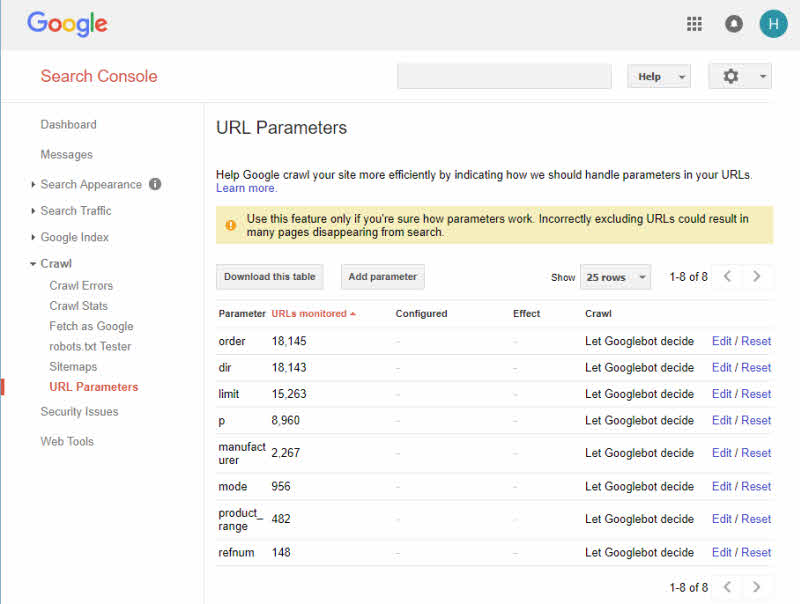

- Use the Google Search Console URL Parameters tool- You can use the GSC URL Parameters tool to find the query strings, since Google automatically adds the query strings it finds on your site. (Google retired this tool in 2022, so it may no longer be available to you.)

- Review your log files- Your server log files show whether Google’s crawler is requesting the parameter-based URLs on your site.

- Search with site: and inurl: advanced operators- To find out how Google indexes the parameters you found on your site, put each key into a site:example.com inurl:key query.

- Use the Google Analytics All Pages report- In the GA All Pages report, search for “?” to see how users use the different parameters you found on your site. Make sure that URL query parameters are not excluded in the view settings.
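Whichever source you use, you usually end up with a list of URLs to review. As a rough sketch, the snippet below counts how many URLs in such a list use each parameter key. The function name and the sample URLs are hypothetical; in practice the list might come from a crawler export or from your log files.

```python
from collections import Counter
from urllib.parse import urlsplit, parse_qsl

def count_param_keys(urls):
    """Count, for each parameter key, how many URLs in the list use it."""
    counts = Counter()
    for url in urls:
        query = urlsplit(url).query
        # A set ensures a key repeated within one URL is counted once for that URL
        for key in {k for k, _ in parse_qsl(query, keep_blank_values=True)}:
            counts[key] += 1
    return counts

# Hypothetical sample of crawled URLs
crawled = [
    "https://www.example.com/widgets",
    "https://www.example.com/widgets?sort=newest",
    "https://www.example.com/widgets?sort=price&colour=blue",
    "https://www.example.com/widgets?colour=blue&colour=pink",
]
for key, n in count_param_keys(crawled).most_common():
    print(f"{key}: {n} URL(s)")
```

Keys that appear on many URLs are usually the first ones worth investigating.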

After working through the steps above, you will have gathered the details of the parameters on your site. Now you can decide how to handle them.

How to handle URL parameters in an SEO-friendly way?

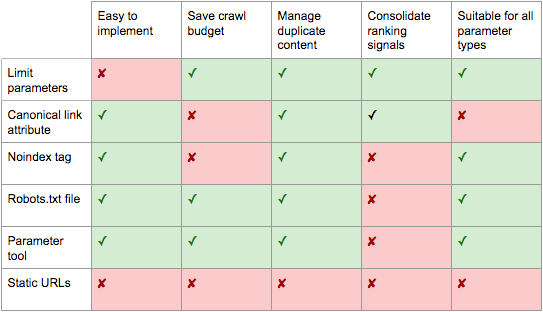

To optimize your URLs for SEO, you need to handle your parameters strategically. You can follow the approaches below to manage URL parameters and optimize the URLs on your site.

1. Limit the Parameter-Based URLs on Your Site

Reviewing how and why parameters are generated is an easy way to help your SEO. It lets you reduce the number of parameter URLs on your site and minimize their negative effect on SEO.

There are four ways to limit parameter-based URLs.


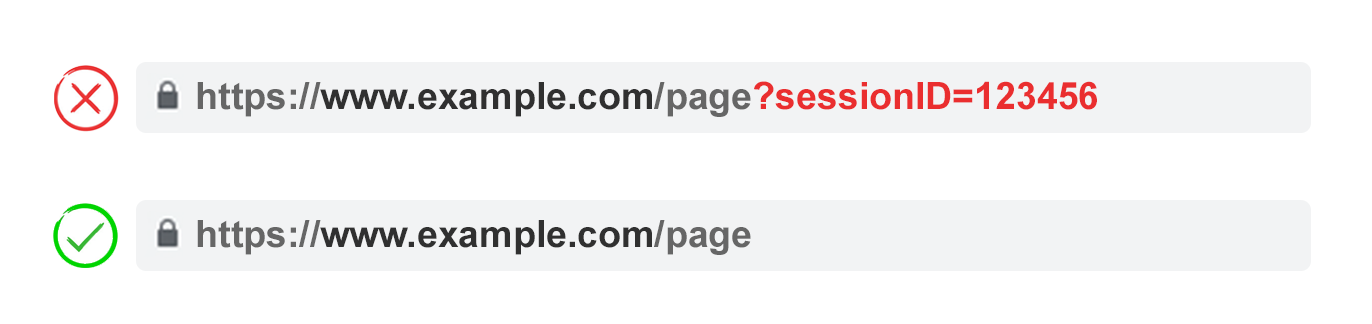

Eliminate the Unnecessary Parameters

To eliminate unwarranted parameters, ask your developer for a list of the URL parameters on your site along with their functions. With that list you can determine which parameters are no longer functional or essential.

For example, cookies identify users better than session IDs do. However, your site may still carry a session ID parameter because it was used in the past. If it is no longer needed, you can eliminate it.

You may also find that users rarely apply a particular filter in your faceted navigation, which makes it another candidate for removal.

Furthermore, any parameter that exists only because of technical debt should be eliminated right away.


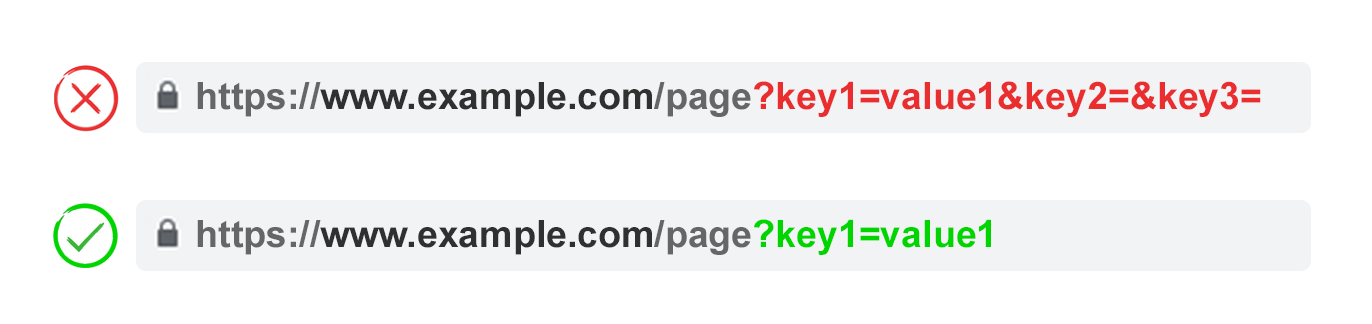

Do Not Use Empty Values

URLs are significant for SEO, so URL parameters should be used only when necessary. Add a parameter only when it has a function and adds value to the URL, and do not add a parameter key when its value is blank.

In the example, key2 and key3 have no values. They are unnecessary because they serve no function and add nothing to the URL.
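If your URLs are built in code, dropping blank keys can be automated. Here is a minimal sketch; the helper name `drop_empty_params` and the example URL are assumptions for illustration.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

def drop_empty_params(url):
    """Remove every parameter whose value is blank."""
    parts = urlsplit(url)
    kept = [(k, v) for k, v in parse_qsl(parts.query, keep_blank_values=True)
            if v != ""]
    return urlunsplit(parts._replace(query=urlencode(kept)))

print(drop_empty_params("https://www.example.com/widgets?key1=value1&key2=&key3="))
# https://www.example.com/widgets?key1=value1
```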


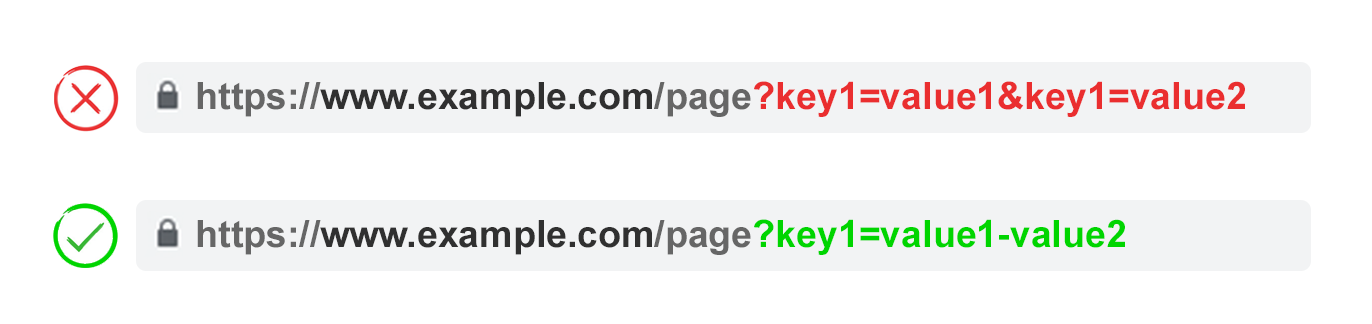

Do Not Repeat Keys: Use Each Key Once

Longer URLs get truncated and so are clicked less. Avoid repeating the same parameter key for each of its values. For multi-select options, use the parameter name once and combine the values after that single key.
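As a sketch of this idea, the function below merges repeated keys into a single key with comma-separated values. The comma separator and the `colour` key are assumptions; your site’s back end must be able to read whichever format you choose.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

def merge_repeated_keys(url, sep=","):
    """Combine the values of repeated keys after a single key."""
    parts = urlsplit(url)
    merged = {}
    for key, value in parse_qsl(parts.query, keep_blank_values=True):
        merged.setdefault(key, []).append(value)
    # safe=sep keeps the separator readable instead of percent-encoding it
    query = urlencode({k: sep.join(v) for k, v in merged.items()}, safe=sep)
    return urlunsplit(parts._replace(query=query))

print(merge_repeated_keys(
    "https://www.example.com/widgets?colour=purple&colour=pink&colour=blue"))
# https://www.example.com/widgets?colour=purple,pink,blue
```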


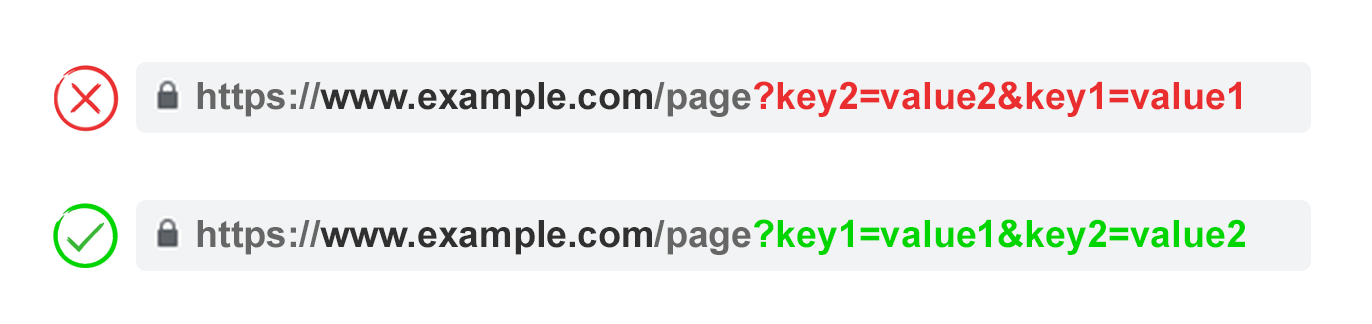

Keep the Order of URL Parameters Consistent

When the same parameters are rearranged, search engines treat the resulting pages as equal, so parameter order does not matter from a duplicate content perspective. However, each combination still uses up crawl budget and splits ranking signals, so parameters should always appear in one consistent order.

A sensible order is translating parameters first, then identifying, then pagination, then filtering and re-ordering or search parameters, and finally tracking parameters.
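A small script can enforce one order no matter how the user picked the options. The sketch below sorts parameters by a fixed priority list that follows the sequence described above; the key names in `PARAM_ORDER` are hypothetical and should be replaced with your own.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

# Hypothetical keys listed as: translating, identifying, pagination,
# filtering, re-ordering, searching, tracking
PARAM_ORDER = ["lang", "category", "page", "colour", "sort", "q",
               "utm_source", "utm_medium"]

def order_params(url):
    """Rewrite the query string so parameters always appear in PARAM_ORDER."""
    parts = urlsplit(url)
    rank = {key: i for i, key in enumerate(PARAM_ORDER)}
    pairs = parse_qsl(parts.query, keep_blank_values=True)
    # Unknown keys go last, sorted alphabetically so the result stays stable
    pairs.sort(key=lambda kv: (rank.get(kv[0], len(PARAM_ORDER)), kv[0]))
    return urlunsplit(parts._replace(query=urlencode(pairs)))

a = order_params("https://www.example.com/widgets?sort=newest&utm_source=newsletter&page=2&lang=fr")
b = order_params("https://www.example.com/widgets?page=2&lang=fr&utm_source=newsletter&sort=newest")
print(a)
print(a == b)  # True
```

Both input URLs produce the same output, so only one version of the page is ever linked.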

You can manage your site’s SEO with all of these limiting tactics. They help you use the crawl budget more efficiently, reduce duplicate content issues on your site, and consolidate ranking signals onto fewer pages. They can help with all types of URL parameters.

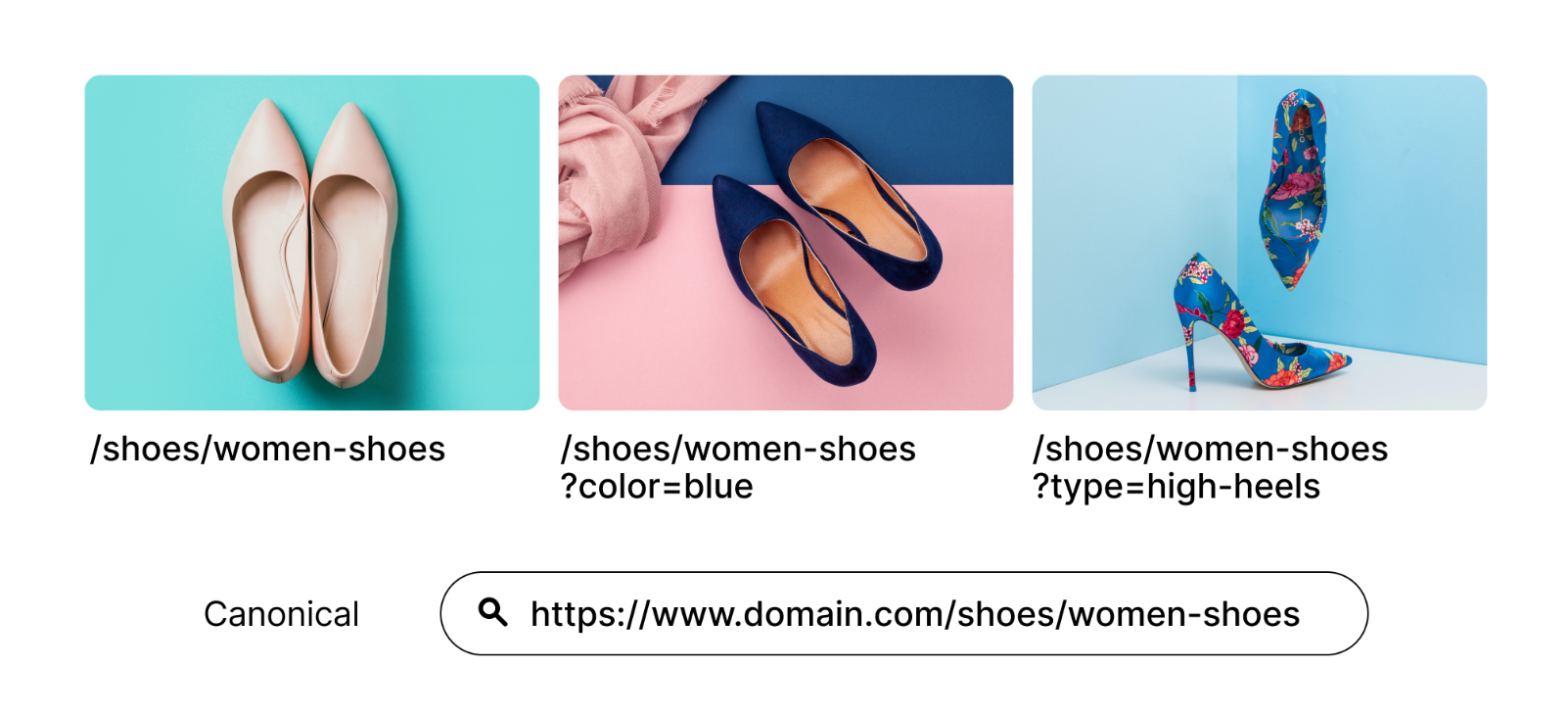

2. Use Canonical Tags for Your Main URL or Page

Canonical tags tell search engines which page is the preferred version among all the similar or identical pages on your site.

To tell Google which page is the most important, you can canonicalize it.

When all the parameterized URLs carry a canonical tag pointing to the main page, search engines consolidate the ranking signals onto the main URL.

You can use this tactic when the pages are very similar to each other, as with identifying, re-ordering, and tracking parameters. However, canonical tags are not suitable when the pages are dissimilar, as with searching, pagination, translating, and some filtering parameters.

As an example, suppose you own an online shoe store, and your site uses the parameterized URLs shown in the image below to help users navigate. The image also shows the canonical link, which points to the main URL.

You can add a canonical tag pointing to the main landing page on all parameterized URLs. This signals Google to index the main landing page, not the parameterized pages.

This helps you prevent duplicate content issues and consolidates ranking signals onto the main URL.
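Here is a rough sketch of how a page template might build its canonical tag. It assumes that only the keys in `NON_CANONICAL_KEYS` are re-ordering or tracking parameters; both that set and the function name are illustrative, not a standard API.

```python
from html import escape
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

# Assumed keys that only re-order or track; URLs that differ only by these
# keys should all point their canonical tag at the same main URL
NON_CANONICAL_KEYS = {"sort", "sessionid", "utm_source", "utm_medium", "utm_campaign"}

def canonical_link_tag(url):
    """Build the canonical link tag for the page served at this URL."""
    parts = urlsplit(url)
    kept = [(k, v) for k, v in parse_qsl(parts.query, keep_blank_values=True)
            if k.lower() not in NON_CANONICAL_KEYS]
    canonical = urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))
    return f'<link rel="canonical" href="{escape(canonical, quote=True)}" />'

print(canonical_link_tag("https://www.example.com/widgets?sort=newest&utm_source=newsletter"))
# <link rel="canonical" href="https://www.example.com/widgets" />
```

Parameters outside the set, such as a pagination key, are left in the canonical URL, which matches the advice not to canonicalize dissimilar pages onto one address.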

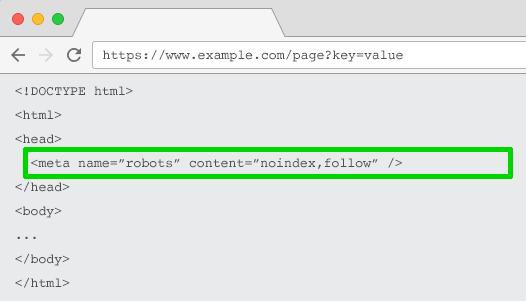

3. Use a Meta Robots Noindex Tag

A noindex tag tells crawlers not to index the page that contains it.

You can set the noindex tag on any page with a parameter-based URL that is not useful from an SEO point of view.

Crawlers that see the noindex tag will not index the page. URLs carrying a noindex tag also tend to be crawled less frequently.

This helps you prevent duplicate content and removes the parameter-based URLs from the index. You can use this approach for all the parameters you want to keep out of the index.
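As an illustration, a template could decide whether to emit the tag based on the parameters in the requested URL. In this sketch the set `NOINDEX_KEYS` is an assumption (here, an internal search parameter), and the function name is hypothetical.

```python
from urllib.parse import urlsplit, parse_qsl

# Assumed keys whose pages should stay out of the index
NOINDEX_KEYS = {"search"}

def robots_meta_tag(url):
    """Return a noindex meta tag if the URL uses a key from NOINDEX_KEYS, else ''."""
    keys = {k.lower() for k, _ in parse_qsl(urlsplit(url).query, keep_blank_values=True)}
    if keys & NOINDEX_KEYS:
        return '<meta name="robots" content="noindex" />'
    return ""

print(robots_meta_tag("https://www.example.com/products?search=widget"))
# <meta name="robots" content="noindex" />
```

The returned string would be placed in the page’s head section. URLs without a listed key get an empty string, so they remain indexable.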

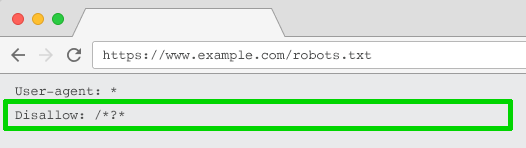

4. Disallow Crawlers Using the Robots.txt File

The parameters you use to sort and filter content can create numerous URLs with similar content. This can create duplicate content and affect your crawl budget.

To prevent this, you can use a Disallow rule to stop crawlers from visiting these sections of your website.

Crawlers usually read the robots.txt file before crawling your website to check whether anything is disallowed. If a crawler finds a Disallow rule that matches a page or URL, it won’t crawl it.

So to optimize your URLs, you can add a rule such as ( Disallow: /*?tag=* ) to your robots.txt file to prevent crawling of the matching parameter-based URLs.

This tactic helps control the crawl budget and prevent duplicate content.

Note: A Disallow rule blocks crawling of every URL that matches its pattern. Therefore, it is best to check that no other part of your URL structure relies on the parameters you block.
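Before deploying a rule, it helps to check which URLs it would actually match. The sketch below is a simplified matcher written for illustration: it handles the ‘*’ wildcard and an optional trailing ‘$’, but it ignores Allow rules and rule precedence, so treat it as a quick sanity check rather than a full robots.txt parser.

```python
import re

def robots_pattern_to_regex(pattern):
    """Translate a robots.txt path pattern into a compiled regular expression."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    # '*' matches any run of characters; everything else is literal
    body = ".*".join(re.escape(piece) for piece in pattern.split("*"))
    return re.compile("^" + body + ("$" if anchored else ""))

def is_disallowed(path_and_query, disallow_patterns):
    """True if any Disallow pattern matches the path plus query string."""
    return any(robots_pattern_to_regex(p).match(path_and_query)
               for p in disallow_patterns)

rules = ["/*?tag=*"]
print(is_disallowed("/widgets?tag=red", rules))              # True
print(is_disallowed("/widgets?sort=newest", rules))          # False
print(is_disallowed("/widgets?sort=newest&tag=red", rules))  # False
```

The last line shows a common surprise: the pattern only matches when tag is the first parameter, because it looks for the literal text ‘?tag=’.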

5. Use the Google Search Console URL Parameters Tool

Isn’t it better to tell Google how to handle your content rather than letting its crawlers decide?

Yes, it is.

You can use the Google Search Console URL Parameters tool to tell Googlebot the purpose of each parameter and how it should handle the URLs containing it. Note that Google retired this tool in 2022, so check whether it is still available to you before relying on it.

When you visit the tool, GSC warns that many pages can disappear from search if you use it. However, duplicate content on your site may be hurting your SEO even more.

That is worth fixing. Learn how to use the tool carefully so that you can direct Google’s crawlers on how to handle your URL parameters and improve your site’s SEO.

If you let Googlebot decide the fate of your parameters, the result may be duplicate content, diluted ranking signals, wasted crawl budget, and less important pages receiving a higher ranking on SERPs.

Therefore, it is best to use the Google Search Console URL Parameters tool to guide the bots about your parameters and handle them in an SEO-friendly way.

6. Move From Dynamic to Static URLs

There are two different kinds of URLs: static URLs and dynamic URLs.

A static URL stays the same regardless of the request and returns the same content each time it loads. This kind of URL does not contain any parameters.

The static URL looks like this- http://www.example.com/contact.html

In contrast, a dynamic URL changes with each query and returns data-driven content from a database, with the page itself serving as a template for that content.

The dynamic URL looks like this- http://www.example.com/forums/thread.php?threadid=12345&sort=date

Static URLs are generally better from an SEO point of view and are more user-friendly.

Google suggests serving the dynamic pages as static pages, rather than merely rewriting dynamic URLs to look static, to improve the URL structure and optimize it for SEO.

To improve parameterized URLs that are already indexed, rewrite them as short URLs and redirect the old URLs to their new static locations.
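As a rough sketch of the redirect step, the function below maps a dynamic URL with a `category` parameter to a static-looking path. The URL scheme, the parameter name, and the function name are all assumptions about a hypothetical site; your server would then send a permanent redirect to the returned address.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode, quote

def static_redirect_target(url):
    """Return the static URL for a ?category=... URL, or None if none applies."""
    parts = urlsplit(url)
    params = dict(parse_qsl(parts.query))
    category = params.pop("category", None)
    if category is None:
        return None  # nothing to rewrite
    # Move the category value into the path; keep any remaining parameters
    path = parts.path.rstrip("/") + "/" + quote(category, safe="")
    return urlunsplit((parts.scheme, parts.netloc, path, urlencode(params), ""))

print(static_redirect_target("https://www.example.com?category=widgets"))
# https://www.example.com/widgets
```

Parameters other than `category` are carried over unchanged, so sorting or tracking still works on the new address.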

Google suggests considering the following when moving from dynamic to static URLs:

- Remove unnecessary parameters, but maintain a dynamic-looking URL.

- Create static content that is equivalent to the original dynamic content.

- Limit dynamic-to-static rewrites to those that let you remove unnecessary URL parameters.

- Be aware that it is hard to correctly create a rewrite that turns dynamic URLs into static-looking URLs.

See what Google’s developers have to say about dynamic vs. static URLs.

7. Use Semrush’s URL Parameter Tool

URL parameters are complex to handle, and auditing them manually is nearly impossible. If you are a Semrush user, its tooling can help.

In the Site Audit tool, the settings include a Remove URL parameters step where you can list all the parameters that you want to keep from being crawled.

This helps you avoid crawling unnecessary URLs, saving crawl budget and preventing duplicate content.

What’s the best URL parameter strategy?

URL parameters help users navigate your site easily.

They sort or filter content so users can reach the desired product quickly, and they are also useful for modifying and tracking content.

However, you need to use them carefully, because parameterized URLs can hold back your main landing pages and take over their chances to rank.

You need not eliminate all the parameterized URLs from your website; instead, you need to handle them in an SEO-friendly way.

You can direct crawlers on how to treat the parameters, or signal which page is the most important among all the parameterized pages.

We have discussed different methods for handling URL parameters, and you can use whichever suits you best.

Suggestion- Do not implement all the tactics at once.

Doing so can create unnecessary complexity for the crawlers, and the solutions may conflict with each other.

For example- you cannot combine a robots.txt disallow with a meta noindex tag. When a URL is disallowed in robots.txt, Google cannot crawl the page and so never sees its meta noindex tag.

Also, a meta noindex tag should not be combined with a canonical tag.

Therefore, apply one or two of the suggested solutions at a time, choosing ones that do not conflict.

We hope this has helped you understand URL parameters in SEO.

You can decide what’s best for your website and what’s not. Keep at it and boost your SEO and your SERP rankings.